🎯 Before You Hit Send: How AI Changes Decision Confidence in Professional Communication

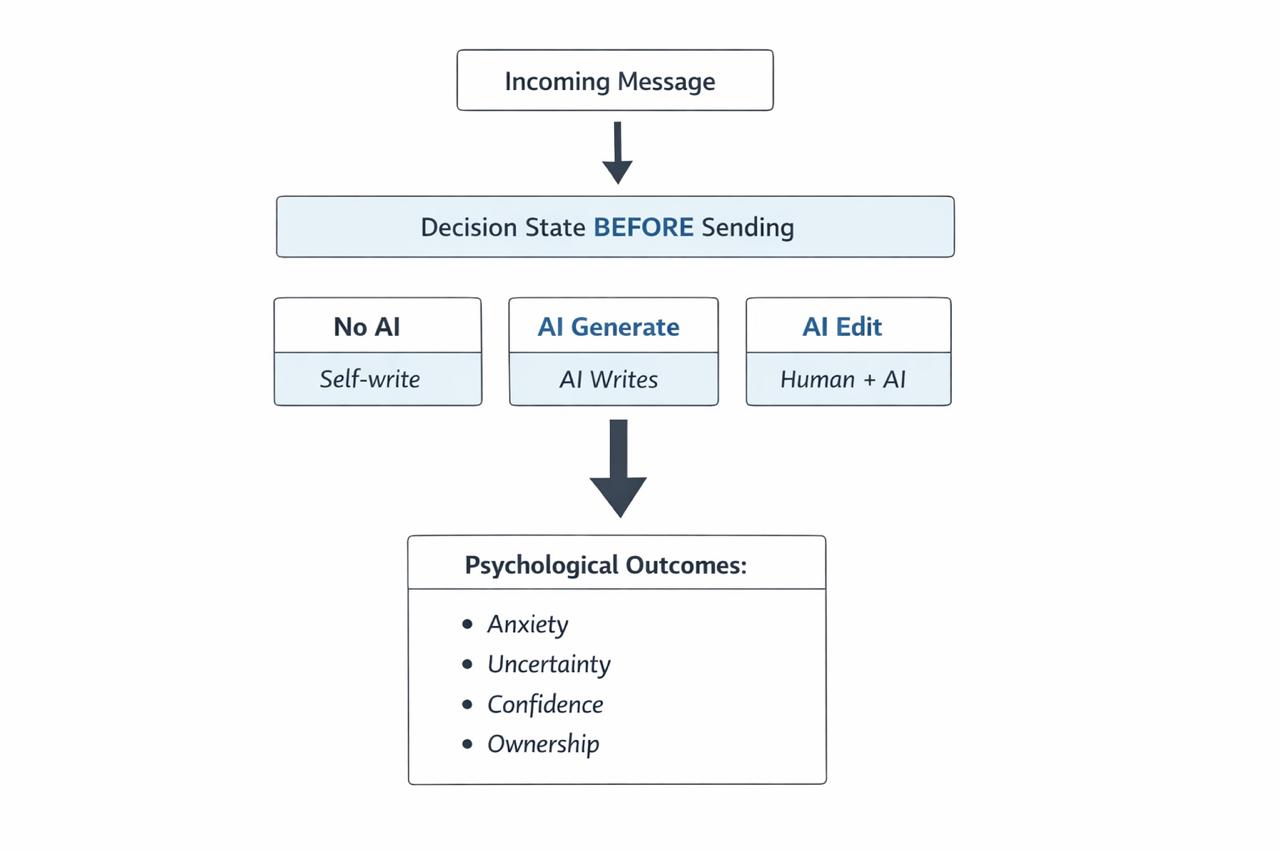

This is a controlled, scenario-based experiment that isolates how AI is used (generate vs. edit) and measures its effect on the user's decision state right before sending: the moment when they choose to send, revise again, or abandon.

Research question

How does AI assistance change users’ decision state immediately before professional communication—and does that effect depend on how AI is used?

Why this is evaluative

Messaging copilots are often evaluated by text quality, but user behavior is driven by pre-send friction and perceived risk. This study operationalizes “helpfulness” as measurable shifts in decision state that predict sending behavior.

Conceptual model

Experimental design

Baseline authorship. Participants write the reply themselves.

AI as external authority. Participants evaluate a fully AI-written reply they are asked to send without editing.

Human + AI collaboration. Participants draft a reply, use AI to revise, then evaluate the final version.

Scenarios

Participants respond to multiple realistic academic/workplace prompts (e.g., advisor criticism, co-author edits, disagreement, follow-up requests). Scenarios are designed to be high-stakes but common, where social risk is salient.

Measures (metrics-first)

- Anxiety — social pressure / fear of negative evaluation

- Uncertainty — “Is this reply appropriate?”

- Confidence — “I’m ready to send”

- Ownership — “This sounds like something I would genuinely say”

Ratings are collected at two time points:

- T1: immediately after reading the incoming message (initial reaction)

- T2: right before sending (decision state after writing / AI assistance)
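One way to turn these ratings into a "shift in decision state" is a simple T2 minus T1 difference per measure, averaged within each condition. The sketch below is illustrative only and is not the study's stated analysis. The `Response` record, the snake_case measure keys, and the condition labels are hypothetical names, and numeric ratings (for example on a 1 to 7 scale) are an assumption. Because the source does not say which measures are collected at which time point, the code only computes a shift for measures rated at both T1 and T2.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

# Measure names follow the list above; the snake_case keys are illustrative.
MEASURES = ("anxiety", "uncertainty", "confidence", "ownership")


@dataclass
class Response:
    participant: str  # hypothetical participant id
    condition: str    # hypothetical label, e.g. "baseline", "ai_generate", "ai_edit"
    t1: dict          # ratings right after reading the incoming message
    t2: dict          # ratings right before sending


def shift(response):
    """Return T2 minus T1 for every measure rated at both time points."""
    return {
        m: response.t2[m] - response.t1[m]
        for m in MEASURES
        if m in response.t1 and m in response.t2
    }


def mean_shift_by_condition(responses):
    """Average the per-response shifts within each condition."""
    buckets = defaultdict(lambda: defaultdict(list))
    for r in responses:
        for measure, delta in shift(r).items():
            buckets[r.condition][measure].append(delta)
    return {
        condition: {measure: mean(deltas) for measure, deltas in by_measure.items()}
        for condition, by_measure in buckets.items()
    }
```

Under this convention, a negative anxiety shift together with a positive confidence shift would mean the participant felt readier to send at T2 than at T1. A measure rated only at T2 would need to be compared across conditions directly rather than as a shift.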

Analysis plan (A/B mindset)

What this enables for product teams

Decide whether the product should default to generate (speed/confidence) or edit (ownership/control).

Make authorship legible: “your draft + AI polish,” diff views, or editable “tone controls” to protect ownership.

Connect psych metrics to behavioral KPIs: edit cycles, time-to-send, abandonment, and user-reported trust.